Network Address Translation

In This Chapter

This chapter provides information about Network Address Translation (NAT) and implementation notes.

Topics in this chapter include:

Terminology

BNG Subscriber — A broader term than the ESM Subscriber, independent of the platform on which the subscriber is instantiated. It includes ESM subscribers on 7750 SR as well as subscribers instantiated on third-party BNGs. Some of the NAT functions, such as Subscriber Aware Large Scale NAT44 utilizing standard RADIUS attributes, work with subscribers independently of the platform on which they are instantiated.

Deterministic NAT — A mode of operation where mappings between the NAT subscriber and the outside IP address and port range are allocated at the time of configuration. Each subscriber is permanently mapped to an outside IP and a dedicated port block. This dedicated port block is referred to as the deterministic port block. Logging is not needed as the reverse mapping can be obtained using a known formula. The subscriber’s ports can be expanded by allocating a dynamic port block if all ports in the deterministic port block are exhausted. In that case, logging of the dynamic port block allocation/de-allocation is required.

Enhanced Subscriber Management (ESM) subscriber — A host or a collection of hosts instantiated in 7750 SR Broadband Network Gateway (BNG). The ESM subscriber represents a household or a business entity for which various services with committed Service Level Agreements (SLA) can be delivered. NAT function is not part of basic ESM functionality.

L2-Aware NAT — In the context of the 7750 SR platform, L2-Aware NAT combines the Enhanced Subscriber Management (ESM) subscriber-id and the inside IP address to perform translation into a unique outside IP address and outside port. This is in contrast with the classical NAT technique, where only the inside IP is considered for address translation. Since the subscriber-id alone is sufficient to make the address translation unique, L2-Aware NAT allows many ESM subscribers to share the same inside IP address. The scalability, performance and reliability requirements are the same as in LSN.

Large Scale NAT (LSN) — Refers to a collection of network address translation techniques used in service provider networks and implemented on highly scalable, high-performance hardware that facilitates various intra-node and inter-node redundancy mechanisms. The purpose of the LSN term is to distinguish the high-scale, high-performance NAT functions found in service provider networks from enterprise NAT, which usually serves a much smaller customer base at lower speeds. The following NAT techniques can be grouped under the LSN name:

- Large Scale NAT44 or Carrier Grade NAT (CGN)

- DS-Lite

- NAT64

Each distinct NAT technique is referred to by its corresponding name (Large Scale NAT44 [or CGN], DS-Lite and NAT64) with the understanding that in the context of 7750 SR platform, they are all part of LSN (and not enterprise based NAT).

The term Large Scale NAT44 can be used interchangeably with the term Carrier Grade NAT (CGN), whose name implies high reliability, high scale and high performance. These are, again, typical requirements found in service provider (carrier) networks.

The term L2-Aware NAT refers to a separate category of NAT defined outside of LSN.

NAT RADIUS accounting — Reporting (or logging) of address translation related events (port-block allocation/de-allocation) via the RADIUS accounting facility. NAT RADIUS accounting is facilitated via regular RADIUS accounting messages (start/interim-update/stop) as defined in RFC 2866, RADIUS Accounting, with NAT-specific VSAs.

The term NAT RADIUS accounting can be used interchangeably with the term NAT RADIUS logging.

NAT Subscriber — In NAT terminology, a NAT subscriber is an inside entity whose true identity is hidden from the outside. There are a few types of NAT implementation in the 7750 SR, and subscribers for each implementation are defined as follows:

- Large Scale NAT44 (or CGN) — The subscriber is an inside IPv4 address.

- L2-Aware NAT — The subscriber is an ESM subscriber which can span multiple IPv4 inside addresses.

- DS-Lite — The subscriber in DS-Lite can be identified by the CPE’s IPv6 address (B4 element) or an IPv6 prefix. The selection of address or prefix as the representation of a DS-Lite subscriber is configuration dependent.

- NAT64 — The subscriber is an IPv6 prefix.

Non-deterministic NAT — A mode of operation where all outside IP address and port block allocations are made dynamically at the time of subscriber instantiation. Logging is required in this case.

Port block — A collection of ports that is assigned to a subscriber. A deterministic LSN subscriber can have only one deterministic port block, which can be extended by multiple dynamic port blocks. A non-deterministic LSN subscriber can be assigned only dynamic port blocks. All port blocks for an LSN subscriber must be allocated from a single outside IP address.

Port range — A collection of ports that can span multiple port blocks of the same type. For example, the deterministic port range includes all ports that are reserved for deterministic consumption. Similarly, the dynamic port range is the total collection of ports that can be allocated in the form of dynamic port blocks. Other types of port ranges are well-known ports and static port forwards.

Network Address Translation (NAT) Overview

The 7750 SR supports Network Address (and port) Translation (NAPT) to provide continuity of legacy IPv4 services during the migration to native IPv6. By equipping the multi-service ISA (MS ISA) in an IOM3-XP, the 7750 SR can operate in two different modes, known as:

- Large Scale NAT, and;

- Layer 2-Aware NAT

Both modes perform source address and port translation as commonly deployed for shared Internet access. The 7750 SR with NAT is used to provide consumer broadband or business Internet customers with access to IPv4 Internet resources through a shared pool of IPv4 addresses, as may be needed around the forecast IPv4 exhaustion. During this time, it is expected that native IPv6 services will still be growing and that a significant amount of Internet content will remain IPv4.

Principles of NAT

Network Address Translation devices modify the IP headers of packets between a host and server, changing some or all of the source address, destination address, source port (TCP/UDP), destination port (TCP/UDP), or ICMP query ID (for ping). The 7750 SR in both NAT modes performs Source Network Address and Port Translation (S-NAPT). S-NAPT devices are commonly deployed in residential gateways and enterprise firewalls to allow multiple hosts to share one or more public IPv4 addresses to access the Internet. The common terms inside and outside in the context of NAT refer to devices inside the NAT (that is, behind or masqueraded by the NAT) and devices outside the NAT, on the public Internet.

TCP/UDP connections use ports for multiplexing, with 65536 ports available for every IP address. Whenever many hosts are trying to share a single public IP address there is a chance of port collision where two different hosts may use the same source port for a connection. The resultant collision is avoided in S-NAPT devices by translating the source port and tracking this in a stateful manner. All S-NAPT devices are stateful in nature and must monitor connection establishment and traffic to maintain translation mappings. The 7750 SR NAT implementation does not use the well-known port range (1..1023).

In most circumstances, S-NAPT requires the inside host to establish a connection to the public Internet host or server before a mapping and translation will occur. With the initial outbound IP packet, the S-NAPT knows the inside IP, inside port, remote IP, remote port and protocol. With this information the S-NAPT device can select an IP and port combination (referred to as outside IP and outside port) from its pool of addresses and create a unique mapping for this flow of data.

Any traffic returned from the server will use the outside IP and outside port in the destination IP/port fields – matching the unique NAT mapping. The mapping then provides the inside IP and inside port for translation.
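The mapping and reverse lookup described above can be sketched as a small translation table. This is a minimal illustration, not the 7750 SR implementation: the class name Snapt, its methods, and the choice to key the mapping on protocol, inside IP and inside port only (an endpoint-independent simplification) are assumptions made for the example.

```python
from typing import Dict, Optional, Tuple

InsideKey = Tuple[str, str, int]  # (protocol, inside IP, inside port)


class Snapt:
    """Toy S-NAPT table (hypothetical; illustration only)."""

    def __init__(self, outside_ip: str, first_port: int = 1024, last_port: int = 65535):
        self.outside_ip = outside_ip
        # Well-known ports (below 1024) are left out of the free list.
        self.free_ports = list(range(first_port, last_port + 1))
        self.out_map: Dict[InsideKey, int] = {}                   # inside -> outside port
        self.in_map: Dict[Tuple[str, int], Tuple[str, int]] = {}  # (proto, outside port) -> inside

    def outbound(self, proto: str, inside_ip: str, inside_port: int) -> Tuple[str, int]:
        """Translate the source of an outbound packet, creating the mapping on first use."""
        key = (proto, inside_ip, inside_port)
        if key not in self.out_map:
            if not self.free_ports:
                raise RuntimeError("no free outside port")
            port = self.free_ports.pop(0)
            self.out_map[key] = port
            self.in_map[(proto, port)] = (inside_ip, inside_port)
        return self.outside_ip, self.out_map[key]

    def inbound(self, proto: str, outside_port: int) -> Optional[Tuple[str, int]]:
        """Return the inside IP and port for returning traffic, or None if no mapping exists."""
        return self.in_map.get((proto, outside_port))
```

Two inside hosts that pick the same source port receive different outside ports, which is how the port collision is avoided. Inbound traffic to a port without a mapping finds nothing to translate to.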

The requirement to create a mapping with inside port and IP, outside port and IP, and protocol will generally prevent new connections from being established from the outside to the inside, as may be needed when an inside host wishes to be a server.

Application Compatibility

Applications which operate as servers (such as HTTP, SMTP, etc) or peer-to-peer applications can have difficulty when operating behind an S-NAPT because traffic from the Internet can reach the NAT without a mapping in place.

Different methods can be employed to overcome this, including:

- Port Forwarding;

- STUN support; and,

- Application Layer Gateways (ALG)

The 7750 SR supports all three methods following the best-practice RFC for TCP (RFC 5382, NAT Behavioral Requirements for TCP) and UDP (RFC 4787, Network Address Translation (NAT) Behavioral Requirements for Unicast UDP). Port Forwarding is supported on the 7750 SR to allow servers which operate on well-known ports <1024 (such as HTTP and SMTP) to request the appropriate outside port for permanent allocation.

STUN is facilitated by the support of Endpoint-Independent Filtering and Endpoint-Independent Mapping (RFC 4787) in the NAT device, allowing STUN-capable applications to detect the NAT and allow inbound P2P connections for that specific application. Many new SIP clients and IM chat applications are STUN capable.

Application Layer Gateways (ALG) allow the NAT to monitor the application running over TCP or UDP and make appropriate changes in the NAT translations to suit. The 7750 SR has an FTP ALG enabled following the recommendation of the IETF BEHAVE RFC for NAT (RFC 5382).

Even with these three mechanisms some applications will still experience difficulty operating behind a NAT. As this is an industry-wide issue, forums like UPnP, the IETF, and the operator and vendor communities are seeking technical alternatives for application developers to traverse NAT (including STUN support). In many cases the alternative of an IPv6-capable application will give better long-term support without the cost or complexity associated with NAT.

Large Scale NAT

Large Scale NAT represents the most common deployment of S-NAPT in carrier networks today. It is already employed by mobile operators around the world for handset access to the Internet.

A Large Scale NAT is typically deployed in a central network location with two interfaces, the inside towards the customers, and the outside towards the Internet. A Large Scale NAT functions as an IP router and is located between two routed network segments (the ISP network and the Internet).

Traffic can be sent to the Large Scale NAT function on the 7750 SR using IP filters (ACL) applied to SAPs or by installing static routes with a next-hop of the NAT application. These two methods allow for increased flexibility in deploying the Large Scale NAT, especially in environments where IP MPLS VPNs are used. In that case the NAT function can be deployed on a single PE and perform NAT for any number of other PEs by simply exporting the default route.

The 7750 SR NAT implementation supports NAT in the base routing instance and in VPRNs. Through NAT, traffic may originate in one VPRN (the inside) and leave through another VPRN or the base routing instance (the outside). This technique can be employed to provide customers of IP MPLS VPNs with Internet access by introducing a default static route in the customer VPRN and NATing the traffic into the Internet routing instance.

As Large Scale NAT is deployed between two routed segments, the IP addresses allocated to hosts on the inside must be unique to each host within the VPRN. While RFC 1918 private addresses have typically been used for this in enterprise or mobile environments, challenges can occur in fixed residential environments where a subscriber has existing S-NAPT in their residential gateway. In these cases the RFC 1918 private address in the home network may conflict with the address space assigned to the residential gateway WAN interface. Some of these issues are documented in draft-shirasaki-nat444-isp-shared-addr-02. Should a conflict occur, many residential gateways will fail to forward IP traffic.

Port Range Blocks

The S-NAPT service on the 7750 SR BNG incorporates a port range block feature to address the scalability of a NAT mapping solution. With a single BNG capable of hundreds of thousands of NAT mappings every second, logging each mapping as it is created and destroyed for later retrieval (as may be required by law enforcement) could quickly overwhelm the fastest of databases and messaging protocols. Port range blocks address the issue of logging and customer location functions by allocating a block of contiguous outside ports to a single subscriber. Rather than logging each NAT mapping, a single log entry is created when the first mapping is created for a subscriber and a final log entry when the last mapping is destroyed. This can reduce the number of log entries by 5000x or more. An added benefit is that, as the range is allocated on the first mapping, external applications or customer location functions may be populated with this data to perform real-time subscriber identification, rather than having to query the NAT for the subscriber identity in real time and possibly delay applications.

Port range blocks are configurable as part of outside pool configuration, allowing the operator to specify the number of ports allocated to each subscriber when a mapping is created. Once a range is allocated to the subscriber, these ports are used for all outbound dynamic mappings and are assigned in a random manner to minimise the predictability of port allocations (draft-ietf-tsvwg-port-randomization-05).

Port range blocks also serve another useful function in a Large Scale NAT environment, and that is to manage the fair allocation of the shared IP resources among different subscribers.

When a subscriber exhausts all ports in their block, further mappings will be prohibited. As with any enforcement system, some exceptions are allowed: the NAT application can be configured with reserved ports to give high-priority applications access to outside port resources even when the block has been exhausted by low-priority applications.
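The port range block behavior described in this section can be sketched as follows. This is a simplified model under stated assumptions: the class PortBlockPool, its method names, the log strings and the one-block-per-subscriber limit are hypothetical and only illustrate block allocation on the first mapping, random port selection within the block, refusal on exhaustion, and the two log entries per block.

```python
import random
from typing import Dict, List, Set


class PortBlockPool:
    """Toy model of port range blocks on one outside IP (hypothetical names)."""

    def __init__(self, first_port: int, last_port: int, block_size: int):
        self.block_size = block_size
        # Start ports of all complete blocks that fit in the range.
        self.free_blocks: List[int] = list(
            range(first_port, last_port - block_size + 2, block_size))
        self.block_of: Dict[str, int] = {}     # subscriber -> start port of its block
        self.in_use: Dict[str, Set[int]] = {}  # subscriber -> ports that carry a mapping
        self.log: List[str] = []

    def map_port(self, subscriber: str) -> int:
        """Create a mapping; the block is allocated and logged on the first mapping only."""
        if subscriber not in self.block_of:
            if not self.free_blocks:
                raise RuntimeError("no free port block")
            start = self.free_blocks.pop(0)
            self.block_of[subscriber] = start
            self.in_use[subscriber] = set()
            self.log.append(f"ALLOC {subscriber} {start}-{start + self.block_size - 1}")
        start = self.block_of[subscriber]
        used = self.in_use[subscriber]
        free = [p for p in range(start, start + self.block_size) if p not in used]
        if not free:
            raise RuntimeError("port block exhausted")  # further mappings are prohibited
        port = random.choice(free)  # random selection to reduce predictability
        used.add(port)
        return port

    def unmap_port(self, subscriber: str, port: int) -> None:
        """Remove a mapping; the block is freed and logged when the last mapping is gone."""
        used = self.in_use.get(subscriber)
        if used is None:
            return
        used.discard(port)
        if not used:
            start = self.block_of.pop(subscriber)
            del self.in_use[subscriber]
            self.free_blocks.append(start)
            self.log.append(f"FREE {subscriber} {start}-{start + self.block_size - 1}")
```

However many mappings a subscriber creates inside the block, the log holds one allocation entry and one release entry, which is where the reduction in log volume comes from.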

Reserved Ports and Priority Sessions

Reserved ports allow an operator to configure a small number of ports to be reserved for designated applications should a port range block be exhausted. Such a scenario may occur when a subscriber is unwittingly subjected to a virus or engaged in extreme cases of P2P file transfers. In these situations, rather than block all new mappings indiscriminately, the 7750 SR NAT application allows operators to nominate a number of reserved ports and then assign a 7750 SR forwarding class as containing high-priority traffic for the NAT application. Whenever traffic that matches a priority session forwarding class reaches the NAT application, reserved ports will be consumed to improve the chances of success. Priority sessions could be used by the operator for services such as DNS, web portal, e-mail and VoIP, to permit these applications even when a subscriber has exhausted their ports.
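One way to picture reserved ports is as an admission check on each new mapping. The sketch below is an assumption-laden model, not the actual admission logic: the class SubscriberPorts, the rule that non-priority sessions may use only the non-reserved part of the block, and the forwarding class names used in the example are illustrative.

```python
from typing import Iterable


class SubscriberPorts:
    """Toy admission check for one subscriber's port block (hypothetical model)."""

    def __init__(self, block_size: int, reserved: int, priority_classes: Iterable[str]):
        if not 0 <= reserved <= block_size:
            raise ValueError("reserved must be between 0 and block_size")
        self.block_size = block_size
        self.reserved = reserved
        self.priority_classes = frozenset(priority_classes)
        self.used = 0  # ports that currently carry a mapping

    def admit(self, forwarding_class: str) -> bool:
        """Return True and consume a port if a new mapping is allowed."""
        if forwarding_class in self.priority_classes:
            limit = self.block_size                  # priority sessions may use reserved ports
        else:
            limit = self.block_size - self.reserved  # other sessions may not
        if self.used >= limit:
            return False
        self.used += 1
        return True
```

With a block of four ports and one reserved port, a fourth best-effort session is refused while a priority session is still admitted.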

Preventing Port Block Starvation

Dynamic Port Block Starvation in LSN

The outside IP address is always shared for the subscriber with a port forward (static or via PCP) and the dynamically allocated port block, insofar as the port from the port forward is in the range >1023. This behavior can lead to starvation of dynamic port blocks for the subscriber. An example for this scenario is shown in Figure 50.

- A static port forward for the WEB server in Home 1 is allocated in the CPE and the CGN. At the time of static port forward creation, no other dynamic port blocks for Home 1 exist (PCs are powered off).

- Assume that the outside IP address for the newly created static port forward in the CGN is 3.3.3.1.

- Over time dynamic port blocks are allocated for a number of other homes that share the same outside IP address, 3.3.3.1. Eventually those dynamic port block allocations will exhaust all dynamic port block range for the address 3.3.3.1.

- Once the dynamic port blocks are exhausted for outside IP address 3.3.3.1, a new outside IP address (for example, 3.3.3.2) will be allocated for additional homes.

Eventually the PCs in Home 1 come to life and they try to connect to the Internet. Due to the dynamic port block exhaustion for the IP address 3.3.3.1 (that is mandated by static port forward – Web Server), the dynamic port block allocation will fail and consequently the PCs will not be able to access the Internet. There will be no additional attempt within CGN to allocate another outside IP address. In the CGN there is no distinction between the PCs in Home 1 and the Web Server when it comes to source IP address. They both share the same source IP address 2.2.2.1 on the CPE.

- The solution for this is to reserve a port block (or blocks) during the static port forward creation for the given subscriber.

Figure 50: Dynamic Port Block Starvation in LSN

Dynamic Port Block Reservation

To prevent starvation of dynamic port blocks for the subscribers that utilize port forwards, a dynamic port block (or blocks) will be reserved during the lifetime of the port forward. Those reserved dynamic port blocks will be associated with the same subscriber that created the port forward. However, a log will not be generated until the dynamic port block is actually used and mappings within that block are created.

At the time of the port forward creation, the dynamic port block will be reserved in the following fashion:

- If the dynamic port block for the subscriber does not exist, then a dynamic port block for the subscriber will be reserved. No log for the reserved dynamic port block is generated until the dynamic port block starts being utilized (mapping created due to the traffic flow).

- If the corresponding dynamic port block already exists, then it will remain reserved even after the last mapping within the last port block has expired.

The reserved dynamic port block (even without any mapping) will continue to be associated with the subscriber as long as the port forward for the subscriber is present. The log (syslog or RADIUS) will be generated only when there is no active mapping within the dynamic port block AND all port forwards for the subscriber are deleted.

Additional considerations with dynamic port block reservation:

- The port block reservation should be triggered only by the first port forward for the subscriber. The subsequent port forwards will not trigger additional dynamic port block reservation.

- Only a single dynamic port block for the subscriber is reserved (that is, multiple port-block reservations for the subscriber are not possible).

- This feature is enabled with the configuration command port-forwarding-dyn-block-reservation under the configure>service>vprn>nat>outside>pool and the configure>router>nat>outside>pool CLI hierarchy. This command can be enabled only if the maximum number of configured port blocks per outside IP is greater than or equal to the maximum configured number of subscribers per outside IP address. This guarantees that all subscribers (up to the maximum number per outside IP address) configured with port forwards will be able to reserve a dynamic port block.

- If port reservation is enabled while the outside pool is operational and subscriber traffic is already present, the following two cases have to be considered:

- The configured number of subscribers per outside IP is less than or equal to the configured number of port blocks per outside IP address (this is permitted), but all dynamic port blocks per outside IP address are occupied at the moment when port reservation is enabled. This leaves existing subscribers with port forwards that do not have any dynamic port blocks allocated (orphaned subscribers) unable to reserve dynamic port blocks. In this case the orphaned subscribers will have to wait until dynamic port blocks allocated to the subscribers without port forwards are freed.

- The configured number of subscribers per outside IP is greater than the configured number of port blocks per outside IP address. In addition, all dynamic port blocks per outside IP address are allocated. Before port reservation can be enabled, the subscriber-limit per outside IP address will have to be lowered (by configuration) so that it is less than or equal to the configured number of port blocks per outside IP address. This action will cause random deletion of subscribers that do not have any port forwards. Such subscribers will be deleted until the number of subscribers falls below the newly configured subscriber limit. Subscribers with static port forwards will not be deleted, regardless of the configured subscriber-limit number. Once the number of subscribers is within the newly configured subscriber-limit, the port reservation can take place, provided that dynamic port blocks are available. If certain subscribers with port forwards have more than one dynamic port block allocated, the orphaned subscribers will have to wait for those additional dynamic port blocks to expire and consequently be released.

- This feature is supported on the following applications: CGN, DS-Lite and NAT64.
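The reservation rules above can be summarized in a small state model. This is a sketch only: the names reservation_allowed and Subscriber, the log strings, and the assumption that a reserved block which was never used produces no log entry at release are illustrative and are not taken from the product.

```python
from dataclasses import dataclass, field
from typing import List


def reservation_allowed(port_blocks_per_ip: int, subscriber_limit_per_ip: int) -> bool:
    """Precondition for enabling reservation: a block exists for every possible subscriber."""
    return port_blocks_per_ip >= subscriber_limit_per_ip


@dataclass
class Subscriber:
    """Toy model of one subscriber's single reserved dynamic port block."""
    name: str
    port_forwards: int = 0
    mappings: int = 0
    block_held: bool = False    # a dynamic port block is reserved or in use
    block_logged: bool = False  # the allocation of the block has been logged
    log: List[str] = field(default_factory=list)

    def add_port_forward(self) -> None:
        self.port_forwards += 1
        self.block_held = True  # only the first port forward changes anything here

    def add_mapping(self) -> None:
        self.block_held = True
        self.mappings += 1
        if not self.block_logged:  # log only once the block is actually used
            self.block_logged = True
            self.log.append("alloc")

    def remove_mapping(self) -> None:
        self.mappings -= 1
        self._maybe_release()

    def remove_port_forward(self) -> None:
        self.port_forwards -= 1
        self._maybe_release()

    def _maybe_release(self) -> None:
        # Released only with no active mapping AND no remaining port forward.
        if self.block_held and self.mappings == 0 and self.port_forwards == 0:
            self.block_held = False
            if self.block_logged:  # assumption: an unused block is released silently
                self.block_logged = False
                self.log.append("free")
```

In this model the block survives the expiry of its last mapping for as long as a port forward exists, and the release is logged only after the last port forward is deleted.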

Timeouts

Creating a NAT mapping is only one half of the problem – removing a NAT mapping at the appropriate time maximizes the shared port resource. Having ports mapped when an application is no longer active reduces solution scale and may impact the customer experience should they exhaust their port range block. The NAT application provides timeout configuration for TCP, UDP and ICMP.

TCP state is tracked for all TCP connections, supporting both three-way handshake and simultaneous TCP SYN connections. Separate and configurable timeouts exist for TCP SYN, TCP transition (between SYN and Open), established and time-wait state. Time-wait assassination is supported and enabled by default to quickly remove TCP mappings in the TIME WAIT state.

UDP does not have the concept of connection state and is subject to a simple inactivity timer. Company-sponsored research into applications and NAT behavior suggested that some applications, like the BitTorrent Distributed Hash Table (DHT), can make a large number of outbound UDP connections that are unsuccessful. Rather than wait the default five (5) minutes to time these out, the 7750 SR NAT application supports a udp-initial timeout which defaults to 15 seconds. When the first outbound UDP packet is sent, the 15-second timer starts. Only after subsequent packets (inbound or outbound) does the default UDP timer become active, which greatly reduces the number of UDP mappings.
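The two-stage UDP timer can be sketched as below. The 15-second and five-minute values are the defaults named in the text. The class UdpMapping and its methods are hypothetical, and time is passed in explicitly so the behavior is easy to follow.

```python
UDP_INITIAL_TIMEOUT = 15      # seconds, applies while only the first packet has been seen
UDP_DEFAULT_TIMEOUT = 5 * 60  # seconds, applies once further packets have been seen


class UdpMapping:
    """Toy inactivity timer for one UDP mapping (hypothetical names)."""

    def __init__(self, now: float):
        self.packets = 1      # the first outbound packet created the mapping
        self.last_seen = now

    def on_packet(self, now: float) -> None:
        """Record a subsequent packet in either direction."""
        self.packets += 1
        self.last_seen = now

    def timeout(self) -> int:
        """Return the inactivity timeout that currently applies."""
        return UDP_INITIAL_TIMEOUT if self.packets == 1 else UDP_DEFAULT_TIMEOUT

    def expired(self, now: float) -> bool:
        return now - self.last_seen >= self.timeout()
```

A mapping created by a single unanswered packet is removed after 15 seconds, while a mapping that has seen a second packet is kept for the full default timeout.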

Watermarks

It is possible to define watermarks to monitor the actual usage of sessions and/or ports.

For each watermark, a high and a low value have to be set. Once the high value is reached, a notification will be sent. As soon as the usage drops below the low watermark, another notification will be sent.

Watermarks can be defined on nat-group, pool and policy level.

- Nat-group: Watermarks can be placed to monitor the total number of sessions on an MDA.

- Pool: Watermarks can be placed to monitor the total number of blocks in use in a pool.

- Policy: In the policy it is possible to define watermarks on session and port usage. In both cases, it is the usage per subscriber (for l2-aware nat) or per host (for large-scale nat) that will be monitored.
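The high and low watermark pair behaves like a hysteresis check. The sketch below assumes that the low notification is sent only after a high notification has been raised. The class Watermark and the returned strings are hypothetical.

```python
from typing import Optional


class Watermark:
    """Toy high/low watermark monitor (hypothetical names)."""

    def __init__(self, low: int, high: int):
        if low > high:
            raise ValueError("low watermark must not exceed high watermark")
        self.low = low
        self.high = high
        self.raised = False  # True between the high and the low notification

    def update(self, usage: int) -> Optional[str]:
        """Return 'high' or 'low' when a notification is due, otherwise None."""
        if not self.raised and usage >= self.high:
            self.raised = True
            return "high"
        if self.raised and usage < self.low:
            self.raised = False
            return "low"
        return None
```

Usage that stays between the two values produces no further notifications, which keeps a value hovering near one threshold from generating a stream of events.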

L2-Aware NAT

Figure 51: L2-Aware Tree

NAT is supported on DHCP, PPPoE and L2TP. There is no support for static and ARP hosts.

In an effort to address issues of conflicting address space raised in draft-shirasaki-nat444-isp-shared-addr-02, an enhancement to Large Scale NAT was co-developed to give every broadband subscriber their own NAT mapping table, yet still share a common outside pool of IPs.

Layer-2 Aware (or subscriber aware) NAT is combined with Enhanced Subscriber Management on the 7750 SR BNG to overcome the issues of colliding address space between home networks and the inside routed network between the customer and Large Scale NAT.

Layer-2 Aware NAT permits every broadband subscriber to be allocated the exact same IPv4 address on their residential gateway WAN link and then proceeds to translate this into a public IP through the NAT application. In doing so, L2-Aware NAT avoids the issues of colliding address space raised in draft-shirasaki without any change to the customer gateway or CPE.

Layer-2-Aware NAT is supported on any of the ESM access technologies, including PPPoE, IPoE (DHCP) and L2TP LNS. For IPoE both n:1 (VLAN per service) and 1:1 (VLAN per subscriber) models are supported. A subscriber device operating with L2-Aware NAT needs no modification or enhancement: existing address mechanisms (DHCP or PPP/IPCP) are identical to those of a public IP service. The 7750 SR BNG simply translates all IPv4 traffic into a pool of IPv4 addresses, allowing many L2-Aware NAT subscribers to share the same IPv4 address.
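The effect of including the subscriber-id in the translation key can be sketched as follows. This is an illustration under assumptions, not the product's data structure: the class L2AwareNat, its methods and the sequential port selection are hypothetical, and port exhaustion is not handled.

```python
import itertools
from typing import Dict, Optional, Tuple

InsideKey = Tuple[str, str, str, int]  # (subscriber-id, protocol, inside IP, inside port)


class L2AwareNat:
    """Toy translation table keyed on subscriber-id plus inside address (hypothetical)."""

    def __init__(self, outside_ip: str, first_port: int = 1024):
        self.outside_ip = outside_ip
        self._next_port = itertools.count(first_port)  # no exhaustion handling in this sketch
        self.out_map: Dict[InsideKey, int] = {}
        self.in_map: Dict[Tuple[str, int], Tuple[str, str, int]] = {}

    def outbound(self, subscriber_id: str, proto: str, inside_ip: str, inside_port: int) -> int:
        """Return the outside port for this subscriber's flow, creating the mapping if needed."""
        key = (subscriber_id, proto, inside_ip, inside_port)
        if key not in self.out_map:
            port = next(self._next_port)
            self.out_map[key] = port
            self.in_map[(proto, port)] = (subscriber_id, inside_ip, inside_port)
        return self.out_map[key]

    def inbound(self, proto: str, outside_port: int) -> Optional[Tuple[str, str, int]]:
        """Return (subscriber-id, inside IP, inside port) for returning traffic."""
        return self.in_map.get((proto, outside_port))
```

Two subscribers that both use the same inside IP address and source port still obtain distinct mappings, and the reverse lookup identifies which subscriber the returning traffic belongs to. A table keyed on the inside IP alone could not tell them apart.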

More information on L2-Aware NAT can be found in draft-miles-behave-l2nat-00.

One-to-One (1:1) NAT

In 1:1 NAT, each source IP address is translated in 1:1 fashion to a corresponding outside IP address. However, the source ports are passed transparently without translation.

The mapping between the inside IP addresses and outside IP addresses in 1:1 NAT supports two modes:

- Dynamic - the operator can specify the outside IP addresses in the pool, but the exact mapping between the inside IP address and the configured outside IP addresses is performed dynamically by the system in a semi-random fashion.

- Static – the mappings between IP addresses are configurable and they can be explicitly set.

The dynamic version of 1:1 NAT is protocol dependent. Only TCP, UDP and ICMP are allowed to traverse such NAT. All other protocols are discarded, with the exception of PPTP with ALG. In this case, only GRE traffic associated with PPTP is allowed through dynamic 1:1 NAT.

The static version of 1:1 NAT is protocol agnostic. This means that all IP-based protocols are allowed to traverse static 1:1 NAT.

The following is applicable to 1:1 NAT:

- Even though source ports are not being translated, the state maintenance for TCP and UDP traffic is still performed.

- Traffic can be initiated from outside towards any statically mapped IPv4 address.

- 1:1 NAT can be supported simultaneously with NAPT (classic non 1:1 NAT) within the same inside routing context. This is accomplished by configuring two separate NAT pools, one for 1:1 NAT and the other for non 1:1 NAPT.

Static 1:1 NAT

In static 1:1 NAT, inside IP addresses are statically mapped to the outside IP addresses. In this fashion, devices on the outside can predictably initiate traffic to the devices on the inside.

Static configuration is based on the CLI concepts used in deterministic NAT. For example:

Static mappings are configured according to the map statements. The map statement can be configured manually by the operator or automatically by the system. IP addresses from the automatically generated map statements are sequentially mapped into available outside IP address in the pool:

- The first inside IP address will be mapped to the 1st available outside IP address from the pool

- The second inside IP address will be mapped to the 2nd available outside IP address from the pool

- Etc.
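The sequential rule for automatically generated map statements can be sketched with the standard ipaddress module. The function sequential_map is hypothetical, every address in the inside prefix is assumed to be mapped, and the addresses in the usage note are illustrative documentation addresses that are not those of the configuration example this section refers to.

```python
import ipaddress
from typing import Dict


def sequential_map(inside_prefix: str, outside_first: str, outside_last: str) -> Dict[str, str]:
    """Map the n-th address of the inside prefix to the n-th address of the outside range."""
    inside = ipaddress.ip_network(inside_prefix)
    start = ipaddress.ip_address(outside_first)
    end = ipaddress.ip_address(outside_last)
    mapping: Dict[str, str] = {}
    for offset, addr in enumerate(inside):  # every address of the prefix, in order
        outside = start + offset
        if outside > end:
            raise ValueError("outside range is smaller than the inside prefix")
        mapping[str(addr)] = str(outside)
    return mapping
```

For instance, sequential_map("10.10.10.0/30", "192.0.2.10", "192.0.2.13") pairs 10.10.10.0 with 192.0.2.10 and 10.10.10.3 with 192.0.2.13.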

The following mappings apply to the example from above:

Protocol Agnostic Behavior

Although static 1:1 NAT is protocol agnostic, the state maintenance for TCP and UDP traffic is still required in order to support ALGs. Because of that, the existing scaling limits related to the number of supported flows still apply.

Protocol agnostic behavior in 1:1 NAT is a property of a NAT pool:

The application agnostic command is a pool create-time parameter. This command will automatically pre-set the following pool parameters:

Once pre-set, these parameters cannot be changed while pool is operating in protocol agnostic mode.

The deterministic port-reservation 65536 command configures the pool to operate in static (or deterministic) mode.

Modification of Parameters in Static 1:1 NAT

Parameters in static 1:1 NAT can be changed according to the following rules:

- The deterministic pool must be in a no shutdown state whenever a prefix or a map command in deterministic NAT is added or removed.

- All configured prefixes referencing the pool via the nat-policy must be deleted (un-configured) before the pool can be shut down.

- Map statements can be modified only when the prefix is in the shutdown state. All existing map statements must be removed before the new ones are created.

Load Distribution over ISAs in Static 1:1 NAT

For best traffic distribution over ISAs, the value of the classic-lsn-max-subscriber-limit parameter should be set to 1.

This means that traffic is load balanced over ISAs based on inside IP addresses. In static 1:1 NAT this is certainly possible since the subscriber-limit parameter at the pool level is preset to a fixed value of 1.

However, if 1:1 static NAT is simultaneously used with regular (many-to-one) deterministic NAT where the subscriber-limit parameter can be set to a value greater than 1, then the classic-lsn-max-subscriber-limit parameter will also have to be set to a value that is greater than 1. The consequence of this is that the traffic will be load balanced based on the consecutive blocks of IP addresses (subnets) rather than individual IP addresses. Further information on this topic is provided in sections describing Deterministic NAT behavior.

NAT-Policy Selection

The traffic match criteria used to select a specific nat-policy in static 1:1 NAT (the deterministic part of the configuration) must not overlap with the traffic match criteria used to select a specific nat-policy in filters or in a destination-prefix statement (these are used for traffic diversion to NAT). Otherwise, traffic will be dropped in the ISA.

A specific nat-policy in this context refers to a non-default nat-policy, or a nat-policy that is directly referenced in a filter, in a destination-prefix command or in a deterministic prefix command.

The following example is used to clarify this point further:

Traffic is diverted to nat using specific nat-policy pol-2:

Deterministic (source) prefix 10.10.10.0/30 is configured to be mapped to specific nat-policy pol-1, which points to a protocol agnostic 1:1 NAT pool.

Packet received in the ISA has srcIP 10.10.10.1 and destIP 192.168.10.10.

If no NAT mapping for this traffic exists in the ISA, a nat-policy (and with it the NAT pool) needs to be determined in order to create the mapping. Traffic is diverted to NAT using nat-policy pol-2, while the deterministic mapping says that nat-policy pol-1 should be used (and thus a different pool from the one referenced in nat-policy pol-2). Due to the specific nat-policy conflict, traffic will be dropped in the ISA.

In order to successfully pass traffic between two subnets through NAT while simultaneously using static 1:1 NAT and regular LSN44, a default (non-specific) nat-policy can be used for regular LSN44.

For example:

In this case, the four hosts from the prefix 10.10.10.0/30 will be mapped in 1:1 fashion to 4 IP addresses from the pool referenced in the specific nat-policy pol-1, while all other hosts from the 10.10.10.0/24 network will be mapped to the NAPT pool referenced by the default nat-policy pol-2. In this fashion, nat-policy conflict is avoided.

In summary, specific nat-policy (in filter, destination-prefix command or in deterministic prefix command) will always take precedence over default nat-policy. However, traffic that matches classification criteria (in filter, destination-prefix command or a deterministic prefix command) that leads to multiple specific nat-policies, will be dropped.
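The precedence rule in the summary can be written as a short selection function. The function select_nat_policy is hypothetical. It takes the set of specific nat-policies that the classification criteria lead to, plus the default nat-policy, and returns None when the traffic would be dropped.

```python
from typing import Optional, Set


def select_nat_policy(specific: Set[str], default: str) -> Optional[str]:
    """Pick the nat-policy for a new mapping, or None if the traffic is dropped.

    specific: names of the specific nat-policies matched through a filter,
              a destination-prefix command or a deterministic prefix command.
    default:  name of the default (non-specific) nat-policy.
    """
    if len(specific) > 1:
        return None                 # conflicting specific nat-policies: drop
    if specific:
        return next(iter(specific))  # a single specific nat-policy wins
    return default                   # no specific match: use the default
```

Using the policy names from the examples in this section: a host in 10.10.10.0/30 matched only by the deterministic prefix selects pol-1, any other host falls back to the default pol-2, and traffic that matches both pol-1 and pol-2 as specific nat-policies is dropped.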

Mapping Timeout

Static 1:1 NAT mappings are explicitly configured, and therefore their lifetime is tied to the configuration.

Logging

The logging mechanism for static mapping is the same as in Deterministic NAT - configuration changes are logged via syslog enhanced with reverse querying on the system.

Restrictions

Static 1:1 NAT is supported only for LSN44 (no support for DS-Lite/NAT64 or L2-aware NAT).

ICMP

In 1:1 NAT, certain ICMP messages contain an additional IP header that is embedded in the ICMP header. For example, when an ICMP message is sent to the source because a datagram could not be delivered to its destination, the node generating the ICMP message includes the original IP header of the packet plus 64 bits of the original datagram. This information helps the source node match the ICMP message to the process that sent the original packet.

When such messages are received in the downstream direction (on the outside), 1:1 NAT recognizes them and changes the destination IP address not only in the outside header but also in the ICMP header. In other words, a lookup is performed in the ISA in the downstream direction to determine whether the packet is an ICMP message of a specific type. Depending on the outcome, the destination IP address in the ICMP header is changed (reverted to the original source IP address).

Messages that carry the original IP header within the ICMP header are:

- Destination Unreachable Messages (Type 3)

- Time Exceeded Message (Type 11)

- Parameter Problem Message (Type 12)

- Source Quench Message (Type 4)

Deterministic NAT

Overview

In deterministic NAT the subscriber is deterministically mapped into an outside IP address and a port block. The algorithm that performs this deterministic mapping is revertive, which means that a NAT subscriber can be uniquely derived from the outside IP address and the outside port (and the routing instance). Thus, logging in deterministic NAT is not needed.

The deterministic [subscriber <-> outside-ip, deterministic-port-block] mapping can be automatically extended by a dynamic port-block if the deterministic port block runs out of ports. Extending the original deterministic port block of the NAT subscriber with a dynamic port block yields a satisfactory compromise between deterministic NAT and non-deterministic NAT. There is no logging as long as the translations are in the domain of the deterministic NAT. Once a dynamic port block is allocated for port extension, logging is automatically activated.

NAT subscribers in deterministic NAT are not assigned an outside IP address and deterministic port-block on a first-come, first-served basis. Instead, deterministic mappings are pre-created at the time of configuration regardless of whether the NAT subscriber is active or not. In other words, overbooking of the outside address pool is not supported in deterministic NAT. Consequently, all configured deterministic subscribers (for example, inside IP addresses in LSN44 or IPv6 address/prefix in DS-Lite) are guaranteed access to NAT resources.

Supported Deterministic NAT Flavors

The routers support Deterministic LSN44 and Deterministic DS-Lite. The basic deterministic NAT principle is applied equally to both NAT flavors. The difference between the two stems from the interpretation of the subscriber: in LSN44 a subscriber is an IPv4 address, whereas in DS-Lite the subscriber is an IPv6 address or prefix (configuration dependent).

With the exception of classic-lsn-max-subscriber-limit and dslite-max-subscriber-limit commands in the inside routing context, the deterministic NAT configuration blocks are for the most part common to LSN44 and DS-Lite.

The Deterministic DS-Lite section at the end of this section focuses on the features specific to DS-Lite.

Number of Subscribers per Outside IP and per Pool

The outside pools in deterministic NAT can contain an arbitrary number of address ranges, where each address range can contain an arbitrary number of IP addresses (up to the ISA maximum).

The maximum number of NAT subscribers that can be mapped to a single outside IP address is configurable using the subscriber-limit command under the pool hierarchy. For Deterministic NAT, this number must be a power of 2 (2^n). As a consequence, NAT subscribers must be organized in the configuration in ranges whose boundaries are powers of 2.

For example, in LSN44 where the NAT subscriber is an IP address, the deterministic subscribers would be configured with prefixes (for example, 10.10.10.0/24 – 256 subscribers) rather than an IP address range that would contain an arbitrary number of addresses (e.g. 10.10.10.10 – 10.10.10.50).

On the other hand, in DS-Lite the deterministic subscribers are for the most part already determined by the prefix with the subscriber-prefix-length command under the DS-Lite configuration node.

The number of subscribers per outside IP (the subscriber-limit command [2^n]) multiplied by the number of IP addresses over all address-range in an outside pool will determine the maximum number of subscribers that a deterministic pool can support.
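As a rough illustration of this capacity calculation, the following Python sketch multiplies the subscriber-limit by the number of addresses across all address ranges of a pool. The function name and the address ranges are hypothetical values chosen for the example; they are not taken from a real configuration.

```python
import ipaddress
from typing import List, Tuple


def pool_capacity(address_ranges: List[Tuple[str, str]], subscriber_limit: int) -> int:
    """Maximum number of deterministic subscribers that a pool can support."""
    # subscriber-limit must be a power of 2 in deterministic NAT.
    if subscriber_limit < 1 or subscriber_limit & (subscriber_limit - 1):
        raise ValueError("subscriber-limit must be a power of 2")
    total_ips = 0
    for first, last in address_ranges:
        start = int(ipaddress.IPv4Address(first))
        end = int(ipaddress.IPv4Address(last))
        if end < start:
            raise ValueError(f"invalid address range {first} - {last}")
        total_ips += end - start + 1
    return total_ips * subscriber_limit


# Hypothetical pool: two address ranges (10 + 6 addresses), subscriber-limit 128.
ranges = [("128.251.0.1", "128.251.0.10"), ("128.251.1.1", "128.251.1.6")]
print(pool_capacity(ranges, 128))  # 2048
```

Because an outside pool cannot be oversubscribed, the total number of subscribers in all inside prefixes mapped to the pool has to stay at or below this value.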

Referencing a Pool

In deterministic NAT, the outside pool can be shared amongst subscribers from multiple routing instances. Also, NAT subscribers from a single routing instance can be selectively mapped to different outside pools.

Outside Pool Configuration

The number of deterministic mappings that a single outside IP address can sustain is determined through the configuration of the outside pool.

The port allocation per an outside IP is shown in Figure 52.

Figure 52: Outside Pool Configuration

The well-known ports are predetermined and are in the range 0 — 1023.

The upper limit of the port range for static port forwards (wildcard range) is determined by the existing port-forwarding-range command.

The range of ports allocated for deterministic mappings (DetP) is determined by multiplying the number of subscribers per outside IP (subscriber-limit command) by the number of ports per deterministic block (deterministic>port-reservation command). The number of subscribers per outside IP in deterministic NAT must be a power of 2 (2^n).

The remaining ports, extending from the end of the deterministic port range to the end of the total port range (65,535) are used for dynamic port allocation. The size of each dynamic port block is determined with the existing port-reservation command.

The deterministic>port-reservation command enables deterministic mode of operation for the pool.
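The port layout described above can be approximated with a short Python sketch. It assumes that the deterministic range starts immediately after the static port forwarding range and that the dynamic range takes all remaining ports up to 65,535; the function name, dictionary keys and input values are illustrative only.

```python
from typing import Dict, Tuple, Union

LAST_PORT = 65535


def port_layout(port_forwarding_range_end: int, subscriber_limit: int,
                det_ports: int, dyn_ports: int) -> Dict[str, Union[Tuple[int, int], int]]:
    """Carve the ports of one outside IP address into the four ranges."""
    det_start = port_forwarding_range_end + 1
    det_end = det_start + subscriber_limit * det_ports - 1
    if det_end > LAST_PORT:
        raise ValueError("deterministic port range does not fit")
    dyn_start = det_end + 1
    remaining = LAST_PORT - dyn_start + 1
    dyn_blocks = remaining // dyn_ports
    return {
        "well_known": (0, 1023),
        "static_port_forwards": (1024, port_forwarding_range_end),
        "deterministic": (det_start, det_end),
        "dynamic": (dyn_start, LAST_PORT),
        "dynamic_blocks": dyn_blocks,
        # Ports left over because they do not fill a whole dynamic block.
        "unused_ports": remaining - dyn_blocks * dyn_ports,
    }


# Illustrative values: static range up to 4023, 128 subscribers per outside IP,
# 300 ports per deterministic block, 180 ports per dynamic block.
layout = port_layout(4023, 128, 300, 180)
print(layout["deterministic"])   # (4024, 42423)
print(layout["dynamic"])         # (42424, 65535)
print(layout["dynamic_blocks"])  # 128
print(layout["unused_ports"])    # 72
```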

Examples:

The following shows three examples with deterministic Large Scale NAT44 where the requirements are:

- 300, 500 or 700 (three separate examples) ports in each deterministic port block.

- A subscriber (an inside IPv4 address in LSN44) can extend its deterministic ports by a minimum of one dynamic port-block and by a maximum of four dynamic port blocks.

- Each dynamic port-block contains 100 ports.

- Oversubscription of dynamic port blocks is 4:1. This means that 1/4th of inside IP addresses may be starved out of dynamic port blocks in worst case scenario.

- The wildcard (static) port range is 3000 ports.

In the first case, the ideal case will be examined where an arbitrary number of subscribers per outside IP address is allocated according to our requirements outlined above. Then the limitation of the number of subscribers being power of 2 will be factored in.

Table 28: Contiguous Number of Subscribers

Well-Known Ports* | Static Port Range* | Number of Ports in Deterministic Block* | Number of Deterministic Blocks | Number of Ports in Dynamic Block* | Number of Dynamic Blocks | Number of Inside IP Addresses per Outside IP Address* | Block Limit per Inside IP Address* | Wasted Ports |

0-1023 | 1024-4023 | 300 | 153 | 100 | 153 | 153 | 5 | 312 |

0-1023 | 1024-4023 | 500 | 102 | 100 | 102 | 102 | 5 | 312 |

0-1023 | 1024-4023 | 700 | 76 | 100 | 76 | 76 | 5 | 712 |

The example in Table 28 shows how port ranges would be carved out in ideal scenario.

* — Signifies the fixed parameters (requirements).

The other values are calculated according to the fixed requirements.

port-block-limit includes the deterministic port block plus all dynamic port-blocks.
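The calculated values in Table 28 can be reproduced with a small Python sketch. It assumes that a maximum of four dynamic blocks per subscriber combined with 4:1 oversubscription works out to one dynamic block per subscriber on average, which is consistent with the table; the function name is hypothetical.

```python
# Ports left for deterministic and dynamic blocks on one outside IP address:
# all 65,536 ports minus well-known (0-1023) and static (1024-4023) ports.
AVAILABLE_PORTS = 65536 - 4024  # 61,512


def ideal_dimensioning(det_ports: int, dyn_ports: int,
                       available: int = AVAILABLE_PORTS) -> tuple:
    """Return (subscribers per outside IP, wasted ports) for the ideal case.

    Assumption: one dynamic block is provisioned per subscriber
    (4 blocks maximum per subscriber, oversubscribed 4:1).
    """
    ports_per_subscriber = det_ports + dyn_ports
    subscribers = available // ports_per_subscriber
    wasted = available - subscribers * ports_per_subscriber
    return subscribers, wasted


for det in (300, 500, 700):
    print(det, ideal_dimensioning(det, 100))
# 300 (153, 312)
# 500 (102, 312)
# 700 (76, 712)
```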

Next, a more realistic example with the number of subscribers equal to 2^n is considered. The ratios between the deterministic ports and the dynamic ports per port-block are preserved from the example above: 3/1, 5/1 and 7/1. In this case, the number of ports per port-block is dictated by the number of subscribers per outside IP address.

Table 29: Preserving Det/Dyn Port Ratio with 2^n Subscribers

Well-Known Ports* | Static Port Range* | Number of Ports in Deterministic Block* | Number of Deterministic Blocks | Number of Ports in Dynamic Block* | Number of Dynamic Blocks | Number of Inside IP Addresses per Outside IP Address* | Block Limit per Inside IP Address* | Wasted Ports |

0-1023 | 1024-4023 | 180 | 256 | 60 | 256 | 256 | 5 | 72 |

0-1023 | 1024-4023 | 400 | 128 | 80 | 128 | 128 | 5 | 72 |

0-1023 | 1024-4023 | 840 | 64 | 120 | 64 | 64 | 5 | 72 |

* — Signifies the fixed parameters (requirements).

The final example also uses 2^n subscribers per outside IP address, but keeps the number of ports in each deterministic port block fixed, as in the original example in Table 28 (300, 500 and 700).

Table 30: Fixed Number of Deterministic Ports with 2^n Subscribers

Well-Known Ports | Static Port Range | Number of Ports in Deterministic Block | Number of Deterministic Blocks | Number of Ports in Dynamic Block | Number of Dynamic Blocks | Number of Inside IP Addresses per Outside IP Address | Block Limit per Inside IP Address | Wasted Ports |

0-1023 | 1024-4023 | 300 | 128 | 180 | 128 | 128 | 5 | 72 |

0-1023 | 1024-4023 | 500 | 64 | 461 | 64 | 64 | 5 | 8 |

0-1023 | 1024-4023 | 700 | 64 | 261 | 64 | 64 | 5 | 8 |

The three examples from above should give us a perspective on the size of deterministic and dynamic port blocks in relation to the number of subscribers (2^n) per outside IP address. Operators should run a similar dimensioning exercise before they start configuring their deterministic NAT.
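Such a dimensioning exercise could be scripted along the following lines. This is a sketch under the same assumptions as the tables (61,512 ports available after the well-known and static ranges, one dynamic block per subscriber); the function names are hypothetical and the two helpers correspond to the approaches in Table 29 and Table 30.

```python
AVAILABLE_PORTS = 65536 - 4024  # 61,512 ports for deterministic + dynamic blocks


def _ports_per_subscriber(subscriber_limit: int, available: int) -> int:
    if subscriber_limit < 1 or subscriber_limit & (subscriber_limit - 1):
        raise ValueError("subscriber-limit must be a power of 2")
    return available // subscriber_limit


def split_by_ratio(subscriber_limit: int, ratio: int,
                   available: int = AVAILABLE_PORTS) -> tuple:
    """Keep a det:dyn ratio of ratio:1; return (det, dyn, wasted ports)."""
    per_sub = _ports_per_subscriber(subscriber_limit, available)
    dyn = per_sub // (ratio + 1)
    det = ratio * dyn
    return det, dyn, available - subscriber_limit * (det + dyn)


def split_fixed_det(subscriber_limit: int, det_ports: int,
                    available: int = AVAILABLE_PORTS) -> tuple:
    """Keep the deterministic block size fixed; return (det, dyn, wasted ports)."""
    per_sub = _ports_per_subscriber(subscriber_limit, available)
    dyn = per_sub - det_ports
    if dyn <= 0:
        raise ValueError("no ports left for a dynamic block")
    return det_ports, dyn, available - subscriber_limit * per_sub


print(split_by_ratio(256, 3))    # (180, 60, 72)
print(split_by_ratio(128, 5))    # (400, 80, 72)
print(split_by_ratio(64, 7))     # (840, 120, 72)
print(split_fixed_det(128, 300)) # (300, 180, 72)
print(split_fixed_det(64, 500))  # (500, 461, 8)
print(split_fixed_det(64, 700))  # (700, 261, 8)
```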

The CLI for the highlighted case in Table 30 (128 subscribers per outside IP address with 300 ports per deterministic port block) is displayed:

Where:

128 subs * 300 ports = 38,400 ports in the deterministic port range

128 subs * 180 ports = 23,040 ports in the dynamic port range

Det+dyn available ports = 65,536 - 4,024 = 61,512

Det+dyn usable ports = 128 * 300 + 128 * 180 = 61,440

61,512 - 61,440 = 72 ports per outside-ip are wasted.

This configuration will allow 128 subscribers (inside IP addresses in LSN44) for each outside address (compression ratio is 128:1) with each subscriber being assigned up to 1020 ports (300 deterministic and 720 dynamic ports over 4 dynamic port blocks).

The outside IP addresses in the pool and their corresponding port ranges are organized as shown in Figure 53.

Figure 53: Outside Address Ranges

Assuming that the figure above depicts an outside deterministic pool, the number of subscribers that can be accommodated by this deterministic pool is represented by purple squares (number of IP addresses in an outside pool * subscriber-limit). The number of subscribers across all configured prefixes on the inside that are mapped to the same deterministic pool must not exceed the number that the outside pool can accommodate. In other words, an outside address pool in deterministic NAT cannot be oversubscribed.

The following is a CLI representation of a deterministic pool definition including the outside IP ranges:

Mapping Rules and the map Command in Deterministic LSN44

The common building block on the inside in the deterministic LSN44 configuration is an IPv4 prefix. The NAT subscribers (inside IPv4 addresses) from the configured prefix will be deterministically mapped to the outside IP addresses and corresponding deterministic port-blocks. Any inside prefix in any routing instance can be mapped to any pool in any routing instance (including the one in which the inside prefix is defined).

The mapping between the inside prefix and the deterministic pool is achieved through a nat-policy that can be referenced per each individual inside IPv4 prefix. IPv4 addresses from the prefixes on the inside will be distributed over the IP addresses defined in the outside pool referenced by the nat-policy.

The mapping itself is represented by the map command under the prefix hierarchy:

The purpose of the map statement is to split the number of subscribers within the configured prefix over available sequences of outside IP addresses. The key parameter that governs mappings between the inside IPv4 addresses and outside IPv4 addresses in deterministic LSN44 is defined by the outside>pool>subscriber-limit command. This parameter must be power of 2 and it limits the maximum number of NAT subscribers that can be mapped to the same outside IP address.

The following rules govern the configuration of the map statement:

- If the number of subscribers per configured prefix is greater than the subscriber-limit per outside IP parameter (2^n), then the lowest n bits of the map start inside-ip-address must be set to 0.

- If the number of subscribers per configured prefix is equal or less than the subscriber-limit per outside IP parameter (2^n), then only one map command for this prefix is allowed. In this case there is no restriction on the lower n bits of the map start inside-ip-address. The range of the inside IP addresses in such map statement represents the prefix itself.

- The outside-ip-address in the map statements must be unique amongst all map statements referencing the same pool. In other words, two map statements cannot reference the same outside-ip-address in the pool.

If the number of subscribers (IP addresses in LSN44) in the map statement is larger than the subscriber-limit per outside IP, then the subscribers must be split over a block of consecutive outside IP addresses, where the outside-ip-address in the map statement represents only the first outside IP address in that block.

The number of subscribers (range of inside IP addresses in LSN44) in the map statement does not have to be a power of 2. Rather it has to be a multiple of a power of two m * 2^n, where m is the number of consecutive outside IP addresses to which the subscribers are mapped and the 2^n is the subscriber-limit per outside IP.

An example of the map statement is given below:

In this case, the configured 10.0.0.0/24 prefix is represented by the range of IP addresses in the map statement (10.0.0.0-10.0.0.255). Since the range of 256 IP addresses in the map statement cannot be mapped into a single outside IP address (subscriber-limit=128), this range must be further implicitly split within the system and mapped into multiple outside IP addresses. The implicit split will create two IP address ranges, each with 128 IP addresses (10.0.0.0/25 and 10.0.0.128/25) so that addresses from each IP range are mapped to one outside IP address. The hosts from the range 10.0.0.0-10.0.0.127 will be mapped to the first IP address in the pool (128.251.0.1) as explicitly stated in the map statement (to statement). The hosts from the second range, 10.0.0.128-10.0.0.255 will be implicitly mapped to the next consecutive IP address (128.251.0.2).
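The implicit split can be illustrated with a short Python sketch that derives the outside IP address for a given inside address. It assumes that the outside addresses following the to address are consecutive and available in the pool; the function name is hypothetical, and the deterministic port block within the outside IP address is not modeled.

```python
import ipaddress


def outside_ip_for(inside_ip: str, map_start: str, map_end: str,
                   map_to: str, subscriber_limit: int) -> str:
    """Outside IP address that an inside address maps to under one map statement."""
    inside = int(ipaddress.IPv4Address(inside_ip))
    start = int(ipaddress.IPv4Address(map_start))
    end = int(ipaddress.IPv4Address(map_end))
    if not start <= inside <= end:
        raise ValueError(f"{inside_ip} is outside the map range")
    # Each chunk of subscriber_limit inside addresses uses the next outside IP.
    chunk = (inside - start) // subscriber_limit
    return str(ipaddress.IPv4Address(map_to) + chunk)


args = ("10.0.0.0", "10.0.0.255", "128.251.0.1", 128)
print(outside_ip_for("10.0.0.5", *args))    # 128.251.0.1
print(outside_ip_for("10.0.0.127", *args))  # 128.251.0.1
print(outside_ip_for("10.0.0.128", *args))  # 128.251.0.2
print(outside_ip_for("10.0.0.255", *args))  # 128.251.0.2
```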

Alternatively, the map statement can be configured as:

In this case the IP address range in the map statement is split into two non-consecutive outside IP addresses. This gives the operator more freedom in configuring the mappings.

However, the following configuration is not supported:

Considering that the subscriber-limit = 128 (2^n; where n=7), the lower n bits of the start address in the second map statement (map start 10.0.0.64 end 10.0.0.127 to 128.251.0.3) are not 0. This is in violation of the rule #1 that governs the provisioning of the map statement.

Assuming that we use the same pool with 128 subscribers per outside IP address, the following scenario is also not supported (configured prefix in this example is different than in previous example):

Although the lower n bits in both map statements are 0, both statements reference the same outside IP (128.251.0.1). This violates the rule that the outside-ip-address must be unique among all map statements referencing the same pool. Each of the prefixes in this case has to be mapped to a different outside IP address, which leads to underutilization of outside IP addresses (half of the deterministic port-blocks in each of the two outside IP addresses will not be utilized).
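The map statement rules can be expressed as a simple offline check, sketched below in Python. This is not the validation performed by the router; the function name and error strings are hypothetical, and apart from the statement map start 10.0.0.64 end 10.0.0.127 to 128.251.0.3 quoted above, the prefixes and map statements in the usage lines are invented for illustration.

```python
import ipaddress
from typing import Dict, List, Tuple

MapStatement = Tuple[str, str, str]  # (start, end, outside-ip-address)


def validate_pool_maps(prefix_maps: Dict[str, List[MapStatement]],
                       subscriber_limit: int) -> List[str]:
    """Check the map statements of all prefixes that reference one pool."""
    low_bits = subscriber_limit - 1
    if subscriber_limit < 1 or subscriber_limit & low_bits:
        raise ValueError("subscriber-limit must be a power of 2")
    errors: List[str] = []
    seen_outside = set()
    for prefix, maps in prefix_maps.items():
        subscribers = ipaddress.IPv4Network(prefix).num_addresses
        if subscribers <= subscriber_limit and len(maps) > 1:
            errors.append(f"{prefix}: only one map statement is allowed")
        for start, _end, outside in maps:
            start_int = int(ipaddress.IPv4Address(start))
            if subscribers > subscriber_limit and start_int & low_bits:
                errors.append(f"{prefix}: lowest n bits of start {start} must be 0")
            if outside in seen_outside:
                errors.append(f"{prefix}: outside IP {outside} is already in use")
            seen_outside.add(outside)
    return errors


# Start address 10.0.0.64 does not have its lowest 7 bits set to 0.
print(validate_pool_maps({"10.0.0.0/24": [
    ("10.0.0.0", "10.0.0.63", "128.251.0.1"),
    ("10.0.0.64", "10.0.0.127", "128.251.0.3")]}, 128))
# Two hypothetical /26 prefixes that reference the same outside IP address.
print(validate_pool_maps({
    "10.0.0.0/26": [("10.0.0.0", "10.0.0.63", "128.251.0.1")],
    "10.0.1.0/26": [("10.0.1.0", "10.0.1.63", "128.251.0.1")]}, 128))
```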

In conclusion, considering that the number of subscribers per outside IP (subscriber-limit) must be 2^n, the inside IP addresses from the configured prefix will be split on the 2^n boundary so that every deterministic port-block of an outside IP is utilized. If the originally configured prefix contains fewer subscribers (IP addresses in LSN44) than an outside IP address can accommodate (2^n), all subscribers from such a configured prefix will be mapped to a single outside IP. Since the outside IP cannot be shared with NAT subscribers from other prefixes, some of the deterministic port-blocks for this particular outside IP address will not be utilized.

Each configured prefix can evaluate into multiple map commands. The number of map commands will depend on the length of the configured prefix, the subscriber-limit command and fragmentation of outside address-range within the pool with which the prefix is associated.

Hashing Considerations in Deterministic LSN44

Support for multiple MS-ISAs in the nat-group calls for traffic hashing on the inside in the ingress direction. This ensures fair load balancing of the traffic among multiple MS-ISAs. While hashing in non-deterministic LSN44 can be performed per source IP address, hashing in deterministic LSN44 is based on subnets instead of individual IP addresses. The length of the hashing subnet is common for all configured prefixes within an inside routing instance. If prefixes from an inside routing instance reference multiple pools, the common hashing prefix length is chosen according to the pool with the highest number of subscribers per outside IP address. This ensures that subscribers mapped to the same outside IP address are always hashed to the same MS-ISA.

In general, load distribution based on hashing is dependent on the sample. A larger and more diverse sample ensures better load balancing. Therefore, the efficiency of load distribution between the MS-ISAs depends on the number and diversity of subnets that the hashing algorithm takes into consideration within the inside routing context.

A simple rule for good load balancing is to configure a large number of subscribers relative to the largest subscriber-limit parameter in any given pool that is referenced from this inside routing instance.

Figure 54: Deterministic LSN44 Configuration Example

The configuration example shown in Figure 54 depicts a case in which prefixes from multiple routing instances are mapped to the same outside pool and, at the same time, the prefixes from a single inside routing instance are mapped to different pools (the latter is not supported with non-deterministic NAT).

Note:

In this example, the inside prefix 10.10.10.0/24 is present in both VPRN 1 and VPRN 2. In both VPRNs, this prefix is mapped to the same pool, pool-1, with a subscriber-limit of 64. Four outside IP addresses per prefix per VPRN (eight in total) are allocated to accommodate the mappings for all hosts in prefix 10.10.10.0/24. However, the hashing prefix length in VPRN 1 is based on the subscriber-limit of 64 (VPRN 1 references only pool-1), while the hashing prefix length in VPRN 2 is based on the subscriber-limit of 256 in pool-2 (VPRN 2 references both pool-1 and pool-2, and the larger subscriber-limit must be selected). As a consequence, traffic from subnet 10.10.10.0/24 in VPRN 1 can be load balanced over 4 MS-ISAs (hashing prefix length is 26), while traffic from subnet 10.10.10.0/24 in VPRN 2 is always sent to the same MS-ISA (hashing prefix length is 24).
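The hashing prefix lengths quoted in the note can be derived with a few lines of Python. The sketch assumes that the hashing prefix length is 32 minus log2 of the largest subscriber-limit referenced from the inside routing instance, which matches the values in the note; the function names are hypothetical.

```python
import ipaddress
from typing import Iterable


def hashing_prefix_length(subscriber_limits: Iterable[int]) -> int:
    """Common IPv4 hashing prefix length for one inside routing instance."""
    largest = max(subscriber_limits)
    if largest & (largest - 1):
        raise ValueError("subscriber-limit must be a power of 2")
    n = largest.bit_length() - 1  # log2 of a power of 2
    return 32 - n


def hashing_subnet_count(prefix: str, hash_len: int) -> int:
    """Number of hashing subnets that a configured prefix is split into."""
    net = ipaddress.IPv4Network(prefix)
    return 2 ** max(0, hash_len - net.prefixlen)


vprn1 = hashing_prefix_length([64])       # VPRN 1 references pool-1 only
vprn2 = hashing_prefix_length([64, 256])  # VPRN 2 references pool-1 and pool-2
print(vprn1, hashing_subnet_count("10.10.10.0/24", vprn1))  # 26 4
print(vprn2, hashing_subnet_count("10.10.10.0/24", vprn2))  # 24 1
```

The subnet count is an upper bound on the number of MS-ISAs that traffic from the prefix can be spread over.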

Distribution of Outside IP Addresses Across MS-ISAs in an MS-ISA NAT Group

Distribution of outside IP addresses across the MS-ISAs is dependent on the ingress hashing algorithm. Since traffic from the same subscriber is always pre-hashed to the same MS-ISA, the corresponding outside IP address also must reside on the same ISA. CPM runs the hashing algorithm in advance to determine on which MS-ISA the traffic from particular inside subnet will land and then the corresponding outside IP address (according to deterministic NAT mapping algorithm) will be configured in that particular MS-ISA.

Sharing of Deterministic NAT Pools

Sharing of the deterministic pools between LSN44 and DS-Lite is supported.

Simultaneous Support of Dynamic and Deterministic NAT

Deterministic and non-deterministic NAT can be used simultaneously inside the same routing instance. However, an outside pool can be only deterministic (although expandable by dynamic port blocks) or non-deterministic at any given time.

Ingress hashing for all NATed traffic within the VRF will in this case be performed based on the subnets driven by the classic-lsn-max-subscriber-limit parameter.

Selecting Traffic for NAT

Deterministic NAT does not change how traffic is selected for the NAT function; it only defines a predictable way of translating subscribers into outside IP addresses and port-blocks.

Traffic is still diverted to NAT using the existing methods:

- routing based – traffic is forwarded to the NAT function if it matches a configured destination prefix that is part of the routing table. In this case inside and outside routing context must be separated.

- filter based – traffic is forwarded to the NAT function based on any criteria that can be defined inside an IP filter. In this case the inside and outside routing context can be the same.

Inverse Mappings

The inverse mapping can be performed with a MIB locally on the node or externally via a script sourced in the router. In both cases, the input parameters are <outside routing instance, outside IP, outside port>. The output from the mapping is the subscriber and the inside routing context in which the subscriber resides.

MIB approach

Reverse mapping information can be obtained using the following command:

Example:

Output:

Inside router 10 ip 20.0.5.171 -- outside router Base ip 85.0.0.2 port 2333 at Mon Jan 7 10:02:02 PST 2013

Off-line Approach to Obtain Deterministic Mappings

Instead of querying the system directly, a Python script can be generated on the router and exported to an external node. This Python script contains the mapping logic for the deterministic NAT configured in the router. The script can then be queried off-line to obtain mappings in either direction. The external node must have Python installed with the following modules: getopt, math, os, socket and sys.

The purpose of such off-line approach is to provide fast queries without accessing the router. Exporting the Python script for reverse querying is a manual operation that needs to be repeated every time there is configuration change in deterministic NAT.

The script is exported outside of the box to a remote location (assuming that writing permissions on the external node are correctly set). The remote location is specified with the following command:

The status of the script is shown using the following command:

Once the script location is specified, the script can be exported to that location with the following command:

This needs to be repeated manually every time the configuration affecting deterministic NAT changes.

The script itself can be run to obtain mapping in forward or backward direction:

The following displays an example in which source addresses are mapped in the following manner:

The forward query for this example will be performed as:

user@external-server:/home/ftp/pub/det-nat-script$ ./det-nat.py -f -s 10 -a 20.0.5.10

Output:

The reverse query for this example will be performed as:

Output:

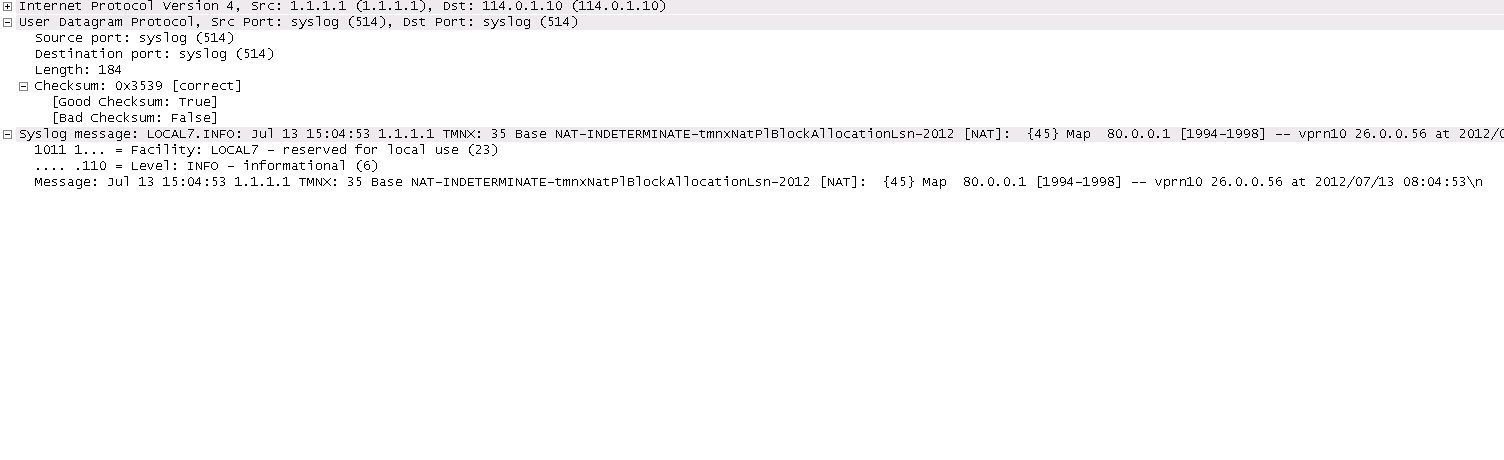

Logging

Every configuration change concerning the deterministic pool will be logged and the script (if configured for export) will be automatically updated (although not exported). This is needed to keep current track of deterministic mappings. In addition, every time a deterministic port-block is extended by a dynamic block, the dynamic block will be logged just as it is today in non-deterministic NAT. The same logic is followed when the dynamic block is de-allocated.

All static port forwards (including PCP) are also logged.

PCP allocates static port forwards from the wildcard-port range.

Deterministic DS-Lite

A subscriber in non-deterministic DS-Lite is defined as an IPv6 prefix, with the prefix length being configured under the DS-Lite NAT node:

All incoming IPv6 traffic with source IPv6 addresses falling under a unique v6 prefix that is configured with subscriber-prefix-length command will be considered as a single subscriber. As a result, all source IPv4 addresses carried within that IPv6 prefix will be mapped to the same outside IPv4 address.

The concept of deterministic DS-Lite is very similar to deterministic LSN44. The DS-lite subscribers (IPv6 addresses/prefixes) are deterministically mapped to outside IPv4 addresses and corresponding deterministic port-blocks.

Although the subscriber in DS-Lite is considered to be either a B4 element (IPv6 address) or the aggregation of B4 elements (IPv6 prefix determined by the subscriber-prefix-length command), only the IPv4 source addresses and ports carried inside of the IPv6 tunnel are actually translated.

The prefix statement for deterministic DS-lite remains under the same deterministic CLI node as for the deterministic LSN44. However, the prefix statement parameters for deterministic DS-Lite differ from the one for deterministic LSN44 in the following fashion:

- DS-Lite prefix will be a v6 prefix (instead of v4). The DS-lite subscriber whose traffic is mapped to a particular outside IPv4 address and the deterministic port block is deduced from the prefix statement and the subscriber-prefix-length statement.

- Subscriber-type is set to dslite-lsn-sub.

Example:

In this case, 16 IPv6 prefixes (from ABCD:FF::/60 to ABCD:FF:00:F0::/60) are considered DS-Lite subscribers. The source IPv4 addresses/ports inside of the IPv6 tunnels are mapped into respective deterministic port blocks within an outside IPv4 address according to the map statement.

The map statement contains minor modifications as well. It maps DS-Lite subscribers (IPv6 address or prefix) to corresponding outside IPv4 addresses. Continuing on the previous example:

map start ABCD:FF::/60 end ABCD:FF:00:F0::/60 to 128.251.1.1

The prefix length (/60) in this case MUST be the same as configured subscriber-prefix-length. If we assume that the subscriber-limit in the corresponding pool is set to 8 and outside IP address range is 128.251.1.1 - 128.251.1.10, then the actual mapping is the following:
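A mapping of this kind can be approximated in Python as follows. The sketch assumes that DS-Lite subscribers are split over consecutive outside IPv4 addresses in the same way as inside IPv4 addresses are in deterministic LSN44 (the first subscriber-limit prefixes to the first outside address, and so on); that assumption, and the function name, are not confirmed by this document.

```python
import ipaddress


def dslite_outside_ip(subscriber_prefix: str, map_start: str,
                      map_to: str, subscriber_limit: int) -> str:
    """Outside IPv4 address for one DS-Lite subscriber prefix (assumed split)."""
    sub = ipaddress.IPv6Network(subscriber_prefix)
    start = ipaddress.IPv6Network(map_start)
    if sub.prefixlen != start.prefixlen:
        raise ValueError("prefix length must equal subscriber-prefix-length")
    # Distance between two consecutive subscriber prefixes of this length.
    step = 1 << (128 - sub.prefixlen)
    offset = int(sub.network_address) - int(start.network_address)
    if offset < 0:
        raise ValueError("subscriber is below the map start")
    index = offset // step
    return str(ipaddress.IPv4Address(map_to) + index // subscriber_limit)


for prefix in ("ABCD:FF::/60", "ABCD:FF:00:70::/60",
               "ABCD:FF:00:80::/60", "ABCD:FF:00:F0::/60"):
    print(prefix, "->", dslite_outside_ip(prefix, "ABCD:FF::/60", "128.251.1.1", 8))
# ABCD:FF::/60 -> 128.251.1.1
# ABCD:FF:00:70::/60 -> 128.251.1.1
# ABCD:FF:00:80::/60 -> 128.251.1.2
# ABCD:FF:00:F0::/60 -> 128.251.1.2
```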

Hashing Considerations in DS-Lite

The ingress hashing and load distribution between the ISAs in Deterministic DS-Lite is governed by the highest number of configured subscribers per outside IP address in any pool referenced within the given inside routing context.

This limit is configured under:

While ingress hashing in non-deterministic DS-Lite is governed by the subscriber-prefix-length command, in deterministic DS-Lite the ingress hashing is governed by the combination of dslite-max-subscriber-limit and subscriber-prefix-length commands. This is to ensure that all DS-Lite subscribers that are mapped to a single outside IP address are always sent to the same MS-ISA (on which that outside IPv4 address resides). In essence, as soon as deterministic DS-Lite is enabled, the ingress hashing is performed on an aggregated set of n = log2(dslite-max-subscriber-limit) contiguous subscribers. n is the number of bits used to represent the largest number of subscribers within an inside routing context, that is mapped to the same outside IP address in any pool referenced from this inside routing context (referenced through the nat-policy).

Once the deterministic DS-lite is enabled (a prefix command under the deterministic CLI node is configured), the ingress hashing influenced by the dslite-max-subscriber-limit will be in effect for both flavors of DS-Lite (deterministic AND non-deterministic) within the inside routing context assuming that both flavors are configured simultaneously.

With introduction of deterministic DS-lite, the configuration of the subscriber-prefix-length must adhere to the following rule:

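- The configured value for the subscriber-prefix-length minus the number of bits representing the dslite-max-subscriber-limit value must be in the range [32..64,128]. Or: (subscriber-prefix-length - n) must be in [32..64,128], where n = log2(dslite-max-subscriber-limit).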

This can be clarified by the two following examples:

- dslite-max-subscriber-limit = 64, therefore n = 6 [log2(64) = 6].

This means that 64 DS-Lite subscribers will be mapped to the same outside IP address. Consequently the prefix length of those subscribers must be reduced by 6 bits for hashing purposes (so that chunks of 64 subscribers are always hashed to the same ISA).

According to our rule, the prefix of those subscribers (subscriber-prefix-length) can be only in the range of [38..64], and no longer in the range [32..64, 128].

- dslite-max-subscriber-limit = 1, therefore n = 0 [log2(1) = 0].

This means that each DS-lite subscriber will be mapped to its own outside IPv4 address. Consequently there is no need for the aggregation of the subscribers for hashing purposes, since each DS-lite subscriber is mapped to an entire outside IPv4 address (with all ports). Since the subscriber prefix length will not be contracted in this case, the prefix length can be configured in the range [32..64, 128].

In other words, the largest prefix (that is, the shortest prefix length) that can be configured for a deterministic DS-lite subscriber is 32+n, where n = log2(dslite-max-subscriber-limit). The subscriber prefix length can extend up to 64 bits. Beyond 64 bits, only one value is allowed for the subscriber prefix length: 128. In that case n must be 0, which means that the mapping between B4 elements (or IPv6 addresses) and the IPv4 outside addresses is in a 1:1 ratio (no sharing of outside IPv4 addresses).
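The rule can be checked with a small Python helper, shown here as a sketch. It combines the [32..64, 128] range of the subscriber-prefix-length with the requirement that the length reduced by n stays within the same range; the function name is hypothetical and the returned value is the prefix length assumed to be used for ingress hashing.

```python
def dslite_hashing_prefix_length(subscriber_prefix_length: int,
                                 dslite_max_subscriber_limit: int) -> int:
    """Validate the DS-Lite prefix length rule and return (length - n)."""
    limit = dslite_max_subscriber_limit
    if limit < 1 or limit & (limit - 1):
        raise ValueError("dslite-max-subscriber-limit must be a power of 2")
    n = limit.bit_length() - 1  # log2(limit)
    length = subscriber_prefix_length
    if not (32 <= length <= 64 or length == 128):
        raise ValueError("subscriber-prefix-length must be in [32..64, 128]")
    if length == 128:
        # A /128 subscriber (single B4 address) cannot be aggregated.
        if n != 0:
            raise ValueError("prefix length 128 requires dslite-max-subscriber-limit 1")
        return 128
    if length - n < 32:
        raise ValueError(f"subscriber-prefix-length must be at least {32 + n}")
    return length - n


print(dslite_hashing_prefix_length(38, 64))  # 32
print(dslite_hashing_prefix_length(64, 64))  # 58
print(dslite_hashing_prefix_length(128, 1))  # 128
```

With dslite-max-subscriber-limit set to 64, a subscriber-prefix-length of 37 or 128 is rejected by this check, in line with the [38..64] range given above.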

The dependency between the subscriber definition in DS-Lite (based on the subscriber-prefix-length) and the subscriber hashing mechanism on ingress (based on the dslite-max-subscriber-limit value), will influence the order in which deterministic DS-lite is configured.

Order of Configuration Steps in Deterministic DS-Lite

Configure deterministic DS-Lite in the following order.

- Configure DS-lite subscriber-prefix-length

- Configure dslite-max-subscriber-limit

- Configure deterministic prefix (using a nat-policy)

- Optionally configure map statements under the prefix

- Configure DS-lite AFTR endpoints

- Enable (no shutdown) DS-lite node

Modifying the dslite-max-subscriber-limit requires that all nat-policies be removed from the inside routing context.

To migrate a non-deterministic DS-Lite configuration to a deterministic DS-Lite configuration, the non-deterministic DS-Lite configuration must be first removed from the system. The following steps should be followed:

- Shutdown DS-lite node

- Remove DS-lite AFTR endpoints

- Remove global nat-policy

- Configure/modify DS-lite subscriber-prefix-length

- Configure dslite-max-subscriber-limit

- Reconfigure global nat-policy

- Configure deterministic prefix

- Optionally configure a manual map statement(s) under the prefix

- Reconfigure DS-lite AFTR endpoints

- Enable (no shutdown) DS-lite node

Configuration Restrictions in Deterministic NAT

NAT Pool

- To modify nat pool parameters, the nat pool must be in a shutdown state.

- Shutting down the nat pool by configuration (shutdown command) is not allowed if any nat-policy referencing this pool is active. In other words, all configured prefixes referencing the pool via the nat-policy must be deleted system-wide before the pool can be shut down. Once the pool is enabled again, all prefixes referencing this pool (with the nat-policy) must be recreated. For a large number of prefixes, this can be performed with an offline configuration file executed using the exec command.

NAT Policy

- All NAT policies (deterministic and non-deterministic) in the same inside routing-instance must point to the same nat-group.

- A nat-policy (be it a global or in a deterministic prefix) must be configured before one can configure an AFTR endpoint.

NAT Group

- The active-mda-limit in a nat-group cannot be modified as long as a deterministic prefix using that NAT group exists in the configuration (even if that prefix is shutdown). In other words, all deterministic prefixes referencing (with the nat-policy) any pool in that nat-group, must be removed.

Deterministic Mappings (prefix and map statements)

- Non-deterministic policy must be removed before adding deterministic mappings.

- Modifying, adding or deleting prefix and map statements in deterministic DS-Lite requires that the corresponding nat pool is enabled (in no-shutdown state).

- Removing an existing prefix statement requires that the prefix node is in a shutdown state.

Similarly, the map statements can be added or removed only if the prefix node is in a shutdown state.

- There are a few rules governing the configuration of the map statement:

- If the number of subscribers per configured prefix is greater than the subscriber-limit per outside IP parameter (2^n), then the lowest n bits of the map start <inside-ip-address> must be set to 0.

- If the number of subscribers per configured prefix is equal or less than the subscriber-limit per outside IP parameter (2^n), then only one map command for this prefix is allowed. In this case there is no restriction on the lower n bits of the map start <inside-ip-address>. The range of the inside IP addresses in such map statement represents the prefix itself.

The outside-ip-address in the map statements must be unique amongst all map statements referencing the same pool. In other words, two map statements cannot reference the same <outside-ip-address> in a pool.
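The map statement rules above can be checked mechanically. The following Python sketch is illustrative only: the function names are hypothetical, it assumes IPv4 inside prefixes with one subscriber per inside IP address, and it is not a model of how SR OS validates the configuration internally.

```python
import ipaddress


def check_map_start(prefix: str, map_start: str, subscriber_limit: int) -> bool:
    """Return True if map_start satisfies the alignment rule for this prefix.

    Assumption: one subscriber per inside IPv4 address, so the number of
    subscribers in the prefix equals the number of addresses in it.
    """
    if subscriber_limit < 1 or subscriber_limit & (subscriber_limit - 1):
        raise ValueError("subscriber-limit must be a power of 2")
    net = ipaddress.ip_network(prefix)
    start = ipaddress.ip_address(map_start)
    if start not in net:
        raise ValueError("map start must lie inside the prefix")
    if net.num_addresses > subscriber_limit:
        # More subscribers than fit behind one outside IP (2^n):
        # the lowest n bits of the map start address must be 0.
        return int(start) & (subscriber_limit - 1) == 0
    # Prefix fits behind a single outside IP: no alignment restriction.
    return True


def outside_ips_unique(outside_ips) -> bool:
    """Return True if no outside IP is used by two map statements of a pool."""
    outside_ips = list(outside_ips)
    return len(set(outside_ips)) == len(outside_ips)
```

For example, with a subscriber-limit of 8 (n = 3), a map starting at 10.0.0.8 within 10.0.0.0/24 passes the check, while a map starting at 10.0.0.4 does not.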

Configuration Parameters

- The subscriber-limit in deterministic nat pool must be a power of 2.

- The nat inside classic-lsn-max-subscriber-limit must be a power of 2 and at least as large as the largest subscriber-limit in any deterministic nat pool referenced by this routing instance. In order to change this parameter, all nat-policies in that inside routing instance must be removed.

- The nat inside ds-lite-max-subscriber-limit must be a power of 2 and at least as large as the largest subscriber-limit in any deterministic nat pool referenced by this routing instance. In order to change this parameter, all nat-policies in that inside routing instance must be removed.

- In DS-lite, the [subscriber-prefix-length - log2(dslite-max-subscriber-limit)] value must fall within [32 ..64, 128].

- In DS-Lite, the subscriber-prefix-length can be modified only if the DS-lite CLI node is in shutdown state and there are no deterministic DS-lite prefixes configured.
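The numeric constraints in this list lend themselves to a quick offline check before a configuration is applied. The Python sketch below is a hedged illustration: the function names are hypothetical, and it only encodes the rules stated above (power-of-2 limits, the maximum limit covering the largest pool limit, and the DS-lite prefix-length window).

```python
def is_power_of_two(value: int) -> bool:
    """True for 1, 2, 4, 8, ..."""
    return value >= 1 and value & (value - 1) == 0


def validate_limits(pool_subscriber_limits, max_subscriber_limit):
    """Return a list of rule violations (an empty list means valid)."""
    errors = []
    for limit in pool_subscriber_limits:
        if not is_power_of_two(limit):
            errors.append(f"pool subscriber-limit {limit} is not a power of 2")
    if not is_power_of_two(max_subscriber_limit):
        errors.append("max-subscriber-limit is not a power of 2")
    elif pool_subscriber_limits and max_subscriber_limit < max(pool_subscriber_limits):
        errors.append("max-subscriber-limit is smaller than the largest pool limit")
    return errors


def dslite_prefix_ok(subscriber_prefix_length: int,
                     dslite_max_subscriber_limit: int) -> bool:
    """Check subscriber-prefix-length - log2(limit) against [32..64, 128]."""
    if not is_power_of_two(dslite_max_subscriber_limit):
        return False
    n = dslite_max_subscriber_limit.bit_length() - 1  # log2 of a power of 2
    contracted = subscriber_prefix_length - n
    return 32 <= contracted <= 64 or contracted == 128
```

For instance, a subscriber-prefix-length of 56 with a dslite-max-subscriber-limit of 256 gives 56 - 8 = 48, which is inside the allowed window, whereas a prefix length of 128 is acceptable only with a limit of 1.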

Miscellaneous

- Deterministic NAT is not supported in combination with 1:1 NAT. Therefore the nat pool cannot be in mode 1:1 when used as a deterministic pool. Even if each subscriber is mapped to its own unique outside IP (sub-limit=1, det-port-reservation ports (65535-1023)), the NAPT (port translation) function is still performed.

- Wildcard port forwards (including PCP) will map to the wildcard port ranges and not the deterministic port range. Consequently logs will be generated for static port forwards using PCP.

Destination Based NAT (DNAT)

Destination NAT (DNAT) in SR OS is supported for LSN44 and L2-Aware NAT. DNAT can be used for traffic steering where the destination IP address of the packet is rewritten. In this fashion traffic can be redirected to an appliance or set of servers that are under the control of the operator, without the need for a separate transport service (for example, PBR plus LSP). Applications utilizing traffic steering via DNAT normally require some form of inline traffic processing, such as inline content filtering (parental control, antivirus/spam, firewalling), video caching, etc. Once the destination IP address of the packet is translated, traffic is naturally routed based on the destination IP address lookup. DNAT will translate the destination IP address in the packet while leaving the original destination port untranslated. Similar to source based NAT (Source Network Address and Port Translation (SNAPT)), the SR OS node will maintain state of DNAT translations so that the source IP address in the return (downstream) packet is translated back to the original address. Traffic selection for DNAT processing in MS-ISA is performed via a NAT classifier.

Combination of SNAPT and DNAT

In certain cases SNAPT is required along with DNAT. In other cases only DNAT is required without SNAPT. The following table shows the supported combinations of SNAPT and DNAT in SR OS.

Table 31: Supported Combinations of SNAPT and DNAT

NAT Type | SNAPT | DNAT-Only | SNAPT + DNAT |

LSN44 | X | X | X |

L2-Aware | X | X |

The SNAPT/DNAT address translations are shown in Figure 55.

Figure 55: IP Address/Port Translation Modes

Forwarding Model in DNAT

NAT forwarding in SR OS is implemented in two stages:

- Traffic is first directed towards the MS-ISA. This is performed via a routing lookup, via a filter or via a subscriber-management lookup (L2-aware NAT). DNAT does not introduce any changes to the steering logic responsible for directing traffic from the I/O line card towards the MS-ISA.

- Once traffic reaches the MS-ISA, translation logic is performed. DNAT functionality will incur an additional lookup in the MS-ISA. This lookup is based on the protocol type and the destination port of the packets, as defined in the nat-classifier.

As part of the NAT state maintenance, the SR OS maintains the following fields for each DNATed flow:

<inside host/port, outside IP/port, foreign IP address/port, destination IP address/port, protocol (TCP, UDP, ICMP)>

Note that the inside host in LSN is the inside IP address, and in L2-Aware NAT it is the <inside IP address + subscriber-index>. The subscriber index is carried in the session-id of the L2TP.

The foreign IP address represents the destination IP address in the original packet, while the destination IP address represents the DNAT address (translated destination IP address).
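To make the roles of these fields concrete, the following Python sketch models one DNATed flow and the rewrites applied in each direction for the SNAPT + DNAT case. The class and function names are hypothetical, and the sketch is only an illustration of the field semantics described above, not of the MS-ISA implementation.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Packet:
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str


@dataclass(frozen=True)
class DnatFlow:
    inside_host: str       # inside IP (LSN); L2-Aware would add a subscriber index
    inside_port: int
    outside_ip: str        # SNAPT-translated source
    outside_port: int
    foreign_ip: str        # destination in the original packet
    foreign_port: int
    destination_ip: str    # DNAT (translated) destination
    destination_port: int  # same as foreign_port: DNAT leaves the port untouched
    protocol: str


def translate_upstream(flow: DnatFlow, pkt: Packet) -> Packet:
    """Inside to outside: rewrite the source (SNAPT) and destination IP (DNAT)."""
    return replace(pkt, src_ip=flow.outside_ip, src_port=flow.outside_port,
                   dst_ip=flow.destination_ip)


def translate_downstream(flow: DnatFlow, pkt: Packet) -> Packet:
    """Outside to inside: restore the foreign address as source and the inside host as destination."""
    return replace(pkt, src_ip=flow.foreign_ip,
                   dst_ip=flow.inside_host, dst_port=flow.inside_port)
```

In the downstream direction the subscriber sees the reply as coming from the foreign IP address it originally contacted, even though the packet was actually served by the DNAT address.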

DNAT Traffic Selection via NAT Classifier

Traffic intended for DNAT processing is selected via a nat classifier. The nat classifier has configurable protocol and destination ports. The inclusion of the classifier in the nat-policy is the trigger for performing DNAT. The configuration of the nat classifier determines whether:

- specific traffic defined in the match criteria is DNATed while the rest of the traffic is transparently passed through the nat classifier, OR

- specific traffic defined in the match criteria is transparently passed through the nat classifier while the rest of the traffic is DNATed.

Classifier cannot drop traffic (no action drop). However, a non-reachable destination IP address in DNAT will cause traffic to be black-holed.

Configuring DNAT

DNAT is enabled in the config>service>nat>nat-policy context.

The DNAT function is triggered by the presence of the nat classifier (nat-classifier command), referenced in the nat-policy. The DNAT-only option is configured when SNAPT is not required. This command is necessary in order to determine the outside routing context and the nat-group when SNAPT is not configured. Pool (relevant to SNAPT) and DNAT-only configuration options within the nat-policy are mutually exclusive.

DNAT Traffic Selection and Destination IP Address Configuration

DNAT traffic selection is performed via a nat-classifier. Nat-classifier is defined under config>service>nat hierarchy and is referenced within the nat-policy.

default-dnat-ip-address is used in all match criteria that contain DNAT action without specific destination IP address. However, the default-dnat-ip-address is ignored in cases where IP address is explicitly configured as part of the action within the match criteria.

default-action is applied to all packets that do not satisfy any match criteria.

forward (forwarding action) has no effect on the packets and will transparently forward packets through the nat-classifier. By default, packets that do not match any matching criteria are transparently passed through the classifier.
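The interaction between match criteria, default-dnat-ip-address and default-action can be sketched in a few lines of Python. This is an illustrative model only: the class names are hypothetical, first-match evaluation of the entries is an assumption, and so is the use of default-dnat-ip-address when the default action is dnat.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Entry:
    protocol: str                      # for example "tcp" or "udp"
    dst_port: int
    action: str                        # "dnat" or "forward"
    ip_address: Optional[str] = None   # explicit DNAT address, if configured


@dataclass
class NatClassifier:
    entries: List[Entry] = field(default_factory=list)
    default_action: str = "forward"    # unmatched packets pass through by default
    default_dnat_ip_address: Optional[str] = None

    def classify(self, protocol: str, dst_ip: str, dst_port: int) -> str:
        """Return the destination IP the packet carries after classification."""
        for entry in self.entries:     # assumption: first matching entry wins
            if entry.protocol == protocol and entry.dst_port == dst_port:
                return self._apply(entry.action, entry.ip_address, dst_ip)
        return self._apply(self.default_action, None, dst_ip)

    def _apply(self, action: str, ip_address: Optional[str], dst_ip: str) -> str:
        if action == "forward":
            return dst_ip              # transparent pass-through, no rewrite
        # An explicit address in the action overrides the classifier default.
        target = ip_address or self.default_dnat_ip_address
        if target is None:
            raise ValueError("dnat action without any DNAT IP address")
        return target
```

Setting default_action to "dnat" and listing forward entries gives the inverse behavior described earlier, where selected traffic passes through untouched and everything else is DNATed.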

Micro-Netting Original Source (Inside) IP Space in DNAT-Only Case

In order to forward upstream and downstream traffic for the same NAT binding to the same MS-ISA, the original source IP address space must be known in advance and consequently hashed on the inside ingress towards the MS-ISAs and micro-netted on the outside. This will be performed with the following CLI:

The classic-lsn-max-subscriber-limit parameter was introduced by deterministic NAT and it is reused here. This parameter affects the distribution of the traffic across multiple MS-ISAs in the upstream direction. A hashing mechanism based on source IPv4 addresses/prefixes is used to distribute incoming traffic on the inside (private side) across the MS-ISAs. Hashing based on the entire IPv4 address will produce the most granular traffic distribution, while hashing based on the IPv4 prefix (determined by prefix length) will produce less granular hashing. For further details about this command, consult the CLI command description.

The source IP prefix is defined in the nat-prefix-list and then applied under the DNAT-only node in the inside routing context. This will instruct the SR OS node to create micro-nets in the outside routing context. The number of routes installed in this fashion is limited by the following configuration:

The configurable range is 1-128K with the default value of 32K.

The DNAT provisioning concept is shown in Figure 56.

Figure 56: DNAT Provisioning Model
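The effect of the classic-lsn-max-subscriber-limit on hashing granularity can be illustrated with a small Python sketch. The actual hash function used by SR OS is not described in this chapter, so the modulo operation below is a placeholder, and the mapping of the limit to log2(limit) discarded low-order bits is an assumption made for illustration. The function name is hypothetical.

```python
import ipaddress


def isa_for_source(src_ip: str, max_subscriber_limit: int, active_isas: int) -> int:
    """Pick an MS-ISA index for an inside source IPv4 address.

    Assumption: a limit of 2^n means hashing on the prefix of length 32 - n,
    that is, the lowest n bits of the source address are ignored.
    """
    if max_subscriber_limit < 1 or max_subscriber_limit & (max_subscriber_limit - 1):
        raise ValueError("limit must be a power of 2")
    n = max_subscriber_limit.bit_length() - 1
    hash_key = int(ipaddress.IPv4Address(src_ip)) >> n
    return hash_key % active_isas  # placeholder for the real hash function
```

With a limit of 1 every address is hashed individually (the most granular distribution), while with a limit of 4 the addresses 10.0.0.1 and 10.0.0.2 fall into the same /30 and are therefore always sent to the same MS-ISA.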

NAT – Multiple NAT Policies per Inside Routing Context

Restrictions

The following restrictions apply to multiple NAT policies per inside routing context:

- There is no support for L2-aware NAT.

- DS-Lite and NAT64 diversion to NAT is supported only through IPv6 filters.

- A maximum of 8 different NAT policies per inside routing context are supported. For routing based NAT diversion, this limit is enforced during the configuration of the NAT policies within the inside routing context. In case of a filter-based NAT diversion, the filter instantiation will fail if the number of different nat-policies per inside routing context exceeds 8.

- The default NAT policy is counted towards this limit (8).

Multiple NAT Policies Per Inside Routing Context

The selection of the NAT pool and the outside routing context is performed through the NAT policy. Multiple NAT policies can be used within an inside routing context. This feature effectively allows selective mapping of the incoming traffic within an inside routing context to different NAT pools (with different mapping properties, such as port-block size, subscriber-limit per pool, address-range, port-forwarding-range, deterministic vs non-deterministic behavior, port-block watermarks, etc.) and to different outside routing contexts. NAT policies can be configured:

- via filters as part of the action nat command.

- via routing with the destination-prefix command within the inside routing context

The concept of the NAT pool selection mechanism based on the destination of the traffic via routing is shown in Figure 57.

Figure 57: Pool Selection Based on Traffic Destination

Diversion of the traffic to NAT based on the source of the traffic is shown in Figure 58.

Only the filter-based diversion solution is supported for this case. The filter-based solution can be extended to 5-tuple matching criteria.

Figure 58: NAT Pool Selection Based on the Inside Source IP Address

The following considerations must be taken into account when deploying multiple NAT policies per inside routing context:

- The inside IP address can be mapped into multiple outside IP addresses based on the traffic destination. The relationship between the inside IP and the outside IP is 1:N.

- In cases where the source IP address is selected as a matching criterion for a NAT policy (or pool) selection, the inside IP address will always stay mapped to the same outside IP address (the relationship between the inside IP and outside IP address is, in this case, 1:1).

- Static Port Forwards (SPF) — Each SPF can be created only in one pool. This means that the pool (or NAT policy) must be an input parameter for SPF creation.

Routing Approach for NAT Diversion

The routing approach relies on upstream traffic being directed (or diverted) to the NAT function based on the destination-prefix command in the configure>service>vprn/router>nat>inside CLI context. In other words, the upstream traffic will be NATed only if it matches a preconfigured destination IP prefix. The destination-prefix command creates a static route in the routing table of the inside routing context. This static route will divert all traffic with the destination IP address that matches the created entry, towards the MS-ISA. The NAT function itself will be performed once the traffic is in the proper context in the MS-ISA.
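The selection of a NAT policy by destination prefix can be pictured as an ordinary route lookup. The Python sketch below is a simplified model under stated assumptions: the class and method names are hypothetical, longest-prefix match is assumed as the tie-breaker between overlapping destination prefixes, and the limit of 8 policies is taken from the Restrictions list earlier in this section.

```python
import ipaddress

MAX_NAT_POLICIES = 8  # per inside routing context, per the Restrictions list


class InsideContext:
    """Toy model of destination-prefix entries in one inside routing context."""

    def __init__(self):
        self.routes = []  # list of (network, nat_policy_name)

    def add_destination_prefix(self, prefix: str, nat_policy: str) -> None:
        policies = {policy for _, policy in self.routes} | {nat_policy}
        if len(policies) > MAX_NAT_POLICIES:
            raise ValueError("more than 8 NAT policies in this routing context")
        self.routes.append((ipaddress.ip_network(prefix), nat_policy))

    def lookup(self, dst_ip: str):
        """Return the NAT policy for dst_ip, or None if traffic is not diverted."""
        dst = ipaddress.ip_address(dst_ip)
        matches = [(net, policy) for net, policy in self.routes if dst in net]
        if not matches:
            return None  # no matching destination prefix: traffic is not NATed
        # Assumption: the most specific (longest) prefix wins.
        return max(matches, key=lambda match: match[0].prefixlen)[1]
```

A context with 0.0.0.0/0 pointing to one policy and a more specific /24 pointing to another would then send traffic for the /24 to the second policy's pool and everything else to the first.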

The CLI for multiple NAT policies per inside routing context with routing based diversion to NAT is the following:

or, for example: